| examples | ||

| .gitignore | ||

| archive.py | ||

| index_template.html | ||

| LICENSE | ||

| README.md | ||

| screenshot.png | ||

Bookmark Archiver

Browser Bookmarks (Chrome, Firefox, Safari, IE, Opera), Pocket, Pinboard, Shaarli, Delicious, Instapaper, Unmark.it

(Your own personal Way-Back Machine) DEMO: sweeting.me/pocket

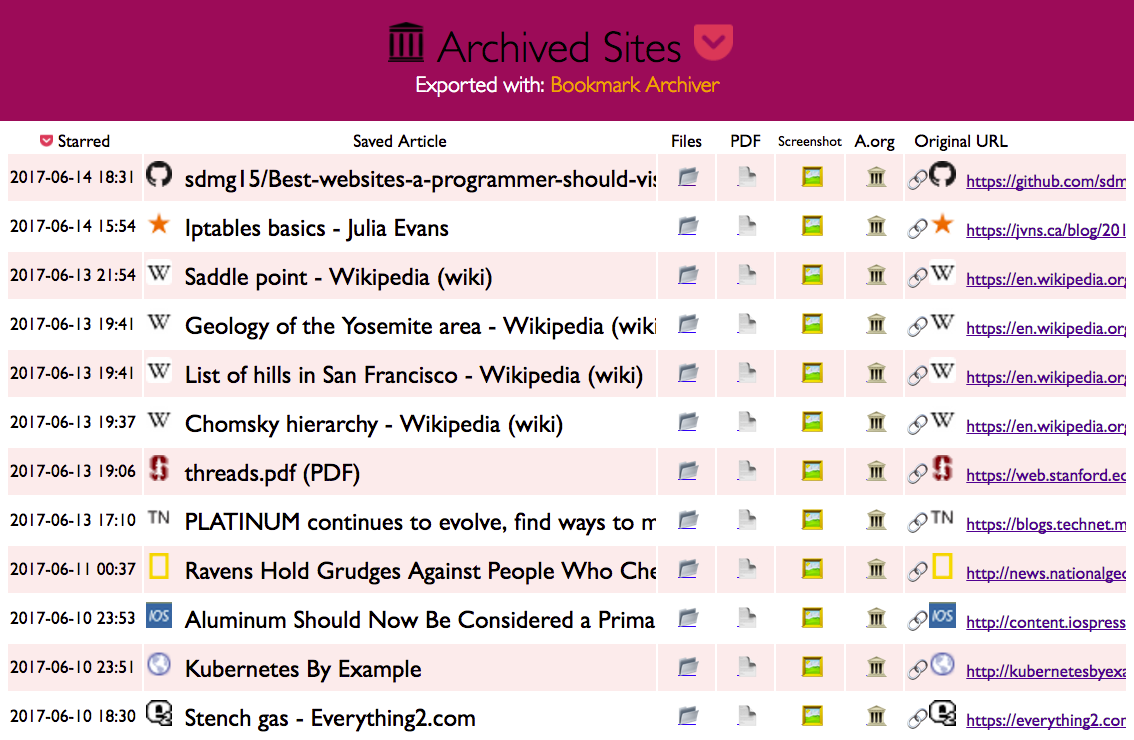

Save an archived copy of all websites you star. Outputs browsable html archives of each site, a PDF, a screenshot, and a link to a copy on archive.org, all indexed in a nice html file.

(Powered by the headless Google Chrome and good 'ol wget.)

NEW: Also submits each link to save on archive.org!

Quickstart

./archive.py bookmark_export.html

archive.py is a script that takes a Pocket-format, Pinboard-format, or Netscape-format bookmark export file, and turns it into a browsable archive that you can store locally or host online.

1. Install dependencies: google-chrome >= 59, wget >= 1.16, python3 >= 3.5 (chromium >= v59 also works well, yay open source!)

# On Mac:

brew install Caskroom/versions/google-chrome-canary wget python3

echo -e '#!/bin/bash\n/Applications/Google\ Chrome\ Canary.app/Contents/MacOS/Google\ Chrome\ Canary "$@"' > /usr/local/bin/google-chrome

chmod +x /usr/local/bin/google-chrome

# On Linux:

wget -q -O - https://dl-ssl.google.com/linux/linux_signing_key.pub | sudo apt-key add -

sudo sh -c 'echo "deb [arch=amd64] http://dl.google.com/linux/chrome/deb/ stable main" >> /etc/apt/sources.list.d/google-chrome.list'

apt update; apt install google-chrome-beta python3 wget

# Check:

google-chrome --version && which wget && which python3 && echo "[√] All dependencies installed."

2. Run the archive script:

- Get your HTML export file from Pocket, Pinboard, Instapaper, Shaarli, Unmark.it, Chrome Bookmarks, Firefox Bookmarks, Safari Bookmarks, Opera Bookmarks, Internet Explorer Bookmarks

- Clone this repo

git clone https://github.com/pirate/bookmark-archiver cd bookmark-archiver/./archive.py ~/Downloads/bookmarks_export.html

You may optionally specify a third argument to archive.py export.html [pocket|pinboard|bookmarks] to enforce the use of a specific link parser.

It produces a folder like pocket/ containing an index.html, and archived copies of all the sites,

organized by starred timestamp. For each sites it saves:

- wget of site, e.g.

en.wikipedia.org/wiki/Example.htmlwith .html appended if not present sreenshot.png1440x900 screenshot of site using headless chromeoutput.pdfPrinted PDF of site using headless chromearchive.org.txtA link to the saved site on archive.org

You can tweak parameters like screenshot size, file paths, timeouts, dependencies, at the top of archive.py.

You can also tweak the outputted html index in index_template.html. It just uses python

format strings (not a proper templating engine like jinja2), which is why the CSS is double-bracketed {{...}}.

Estimated Runtime: I've found it takes about an hour to download 1000 articles, and they'll take up roughly 1GB. Those numbers are from running it single-threaded on my i5 machine with 50mbps down. YMMV. Users have also reported running it with 50k+ bookmarks with success (though it will take more RAM while running).

Troubleshooting:

On some Linux distributions the python3 package might not be recent enough. If this is the case for you, resort to installing a recent enough version manually.

add-apt-repository ppa:fkrull/deadsnakes && apt update && apt install python3.6

If you still need help, the official Python docs are a good place to start.

To switch from Google Chrome to chromium, change the CHROME_BINARY variable at the top of archive.py.

If you're missing wget or curl, simply install them using apt or your package manager of choice.

Live Updating: (coming soon... maybe...)

It's possible to pull links via the pocket API or public pocket RSS feeds instead of downloading an html export.

Once I write a script to do that, we can stick this in cron and have it auto-update on it's own.

For now you just have to download ril_export.html and run archive.py each time it updates. The script

will run fast subsequent times because it only downloads new links that haven't been archived already.

Publishing Your Archive

The archive is suitable for serving on your personal server, you can upload the

archive to /var/www/pocket and allow people to access your saved copies of sites.

Just stick this in your nginx config to properly serve the wget-archived sites:

location /pocket/ {

alias /var/www/pocket/;

index index.html;

autoindex on;

try_files $uri $uri/ $uri.html =404;

}

Make sure you're not running any content as CGI or PHP, you only want to serve static files!

Urls look like: https://sweeting.me/archive/archive/1493350273/en.wikipedia.org/wiki/Dining_philosophers_problem

Info

This is basically an open-source version of Pocket Premium (which you should consider paying for!). I got tired of sites I saved going offline or changing their URLS, so I started archiving a copy of them locally now, similar to The Way-Back Machine provided by archive.org. Self hosting your own archive allows you to save PDFs & Screenshots of dynamic sites in addition to static html, something archive.org doesn't do.

Now I can rest soundly knowing important articles and resources I like wont dissapear off the internet.

My published archive as an example: sweeting.me/pocket.

Security WARNING & Content Disclaimer

Hosting other people's site content has security implications for your domain, make sure you understand the dangers of hosting other people's CSS & JS files on your domain. It's best to put this on a domain of its own to slightly mitigate CSRF attacks.

You may also want to blacklist your archive in your /robots.txt so that search engines dont index

the content on your domain.

Be aware that some sites you archive may not allow you to rehost their content publicly for copyright reasons, it's up to you to host responsibly and respond to takedown requests appropriately.

TODO

- body text extraction using fathom

- auto-tagging based on important extracted words

- audio & video archiving with

youtube-dl - full-text indexing with elasticsearch

- video closed-caption downloading for full-text indexing video content

- automatic text summaries of article with summarization library

- feature image extraction

- http support (from my https-only domain)

- try wgetting dead sites from archive.org (https://github.com/hartator/wayback-machine-downloader)

Links

- Hacker News Discussion

- Reddit r/selfhosted Discussion

- Reddit r/datahoarder Discussion

- https://wallabag.org + https://github.com/wallabag/wallabag

- https://webrecorder.io/

- https://github.com/ikreymer/webarchiveplayer#auto-load-warcs

- Shaarchiver very similar project that archives Firefox, Shaarli, or Delicious bookmarks and all linked media, generating a markdown/HTML index