15 KiB

| type | stage | group | info |

|---|---|---|---|

| reference, concepts | Enablement | Distribution | To determine the technical writer assigned to the Stage/Group associated with this page, see https://about.gitlab.com/handbook/engineering/ux/technical-writing/#designated-technical-writers |

Reference architectures

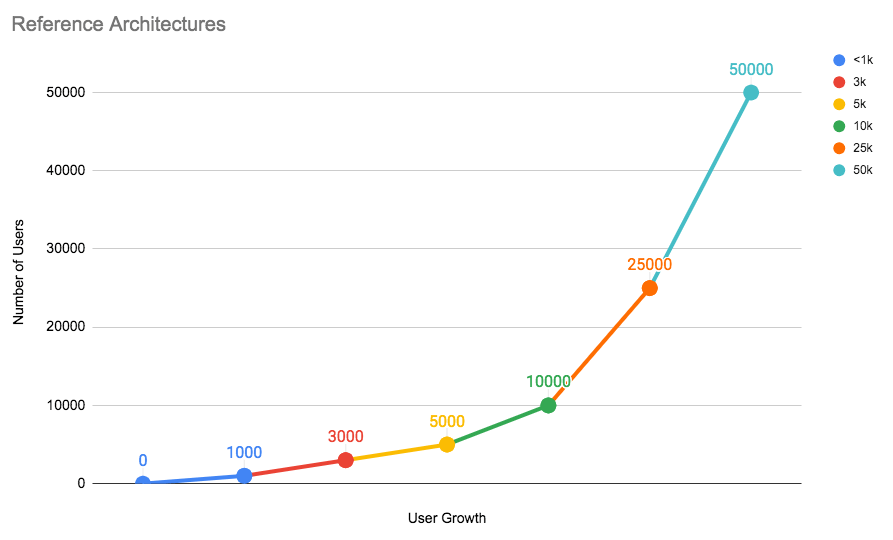

You can set up GitLab on a single server or scale it up to serve many users. This page details the recommended Reference Architectures that were built and verified by GitLab's Quality and Support teams.

Below is a chart representing each architecture tier and the number of users they can handle. As your number of users grow with time, it’s recommended that you scale GitLab accordingly.

Testing on these reference architectures were performed with GitLab's Performance Tool at specific coded workloads, and the throughputs used for testing were calculated based on sample customer data. After selecting the reference architecture that matches your scale, refer to Configure GitLab to Scale to see the components involved, and how to configure them.

Each endpoint type is tested with the following number of requests per second (RPS) per 1,000 users:

- API: 20 RPS

- Web: 2 RPS

- Git: 2 RPS

For GitLab instances with less than 2,000 users, it's recommended that you use the default setup by installing GitLab on a single machine to minimize maintenance and resource costs.

If your organization has more than 2,000 users, the recommendation is to scale GitLab's components to multiple machine nodes. The machine nodes are grouped by components. The addition of these nodes increases the performance and scalability of to your GitLab instance.

When scaling GitLab, there are several factors to consider:

- Multiple application nodes to handle frontend traffic.

- A load balancer is added in front to distribute traffic across the application nodes.

- The application nodes connects to a shared file server and PostgreSQL and Redis services on the backend.

NOTE: Note: Depending on your workflow, the following recommended reference architectures may need to be adapted accordingly. Your workload is influenced by factors including how active your users are, how much automation you use, mirroring, and repository/change size. Additionally the displayed memory values are provided by GCP machine types. For different cloud vendors, attempt to select options that best match the provided architecture.

Available reference architectures

The following reference architectures are available:

- Up to 1,000 users

- Up to 2,000 users

- Up to 3,000 users

- Up to 5,000 users

- Up to 10,000 users

- Up to 25,000 users

- Up to 50,000 users

Availability Components

GitLab comes with the following components for your use, listed from least to most complex:

- Automated backups

- Traffic load balancer

- Zero downtime updates

- Automated database failover

- Instance level replication with GitLab Geo

As you implement these components, begin with a single server and then do backups. Only after completing the first server should you proceed to the next.

Also, not implementing extra servers for GitLab doesn't necessarily mean that you'll have more downtime. Depending on your needs and experience level, single servers can have more actual perceived uptime for your users.

Automated backups (CORE ONLY)

- Level of complexity: Low

- Required domain knowledge: PostgreSQL, GitLab configurations, Git

- Supported tiers: GitLab Core, Starter, Premium, and Ultimate

This solution is appropriate for many teams that have the default GitLab installation. With automatic backups of the GitLab repositories, configuration, and the database, this can be an optimal solution if you don't have strict requirements. Automated backups is the least complex to setup. This provides a point-in-time recovery of a predetermined schedule.

Traffic load balancer (STARTER ONLY)

- Level of complexity: Medium

- Required domain knowledge: HAProxy, shared storage, distributed systems

- Supported tiers: GitLab Starter, Premium, and Ultimate

This requires separating out GitLab into multiple application nodes with an added load balancer. The load balancer will distribute traffic across GitLab application nodes. Meanwhile, each application node connects to a shared file server and database systems on the back end. This way, if one of the application servers fails, the workflow is not interrupted. HAProxy is recommended as the load balancer.

With this added component you have a number of advantages compared to the default installation:

- Increase the number of users.

- Enable zero-downtime upgrades.

- Increase availability.

Zero downtime updates (STARTER ONLY)

- Level of complexity: Medium

- Required domain knowledge: PostgreSQL, HAProxy, shared storage, distributed systems

- Supported tiers: GitLab Starter, Premium, and Ultimate

GitLab supports zero-downtime updates. Single GitLab nodes can be updated with only a few minutes of downtime. To avoid this, we recommend to separate GitLab into several application nodes. As long as at least one of each component is online and capable of handling the instance's usage load, your team's productivity will not be interrupted during the update.

Automated database failover (PREMIUM ONLY)

- Level of complexity: High

- Required domain knowledge: PgBouncer, Repmgr or Patroni, shared storage, distributed systems

- Supported tiers: GitLab Premium and Ultimate

By adding automatic failover for database systems, you can enable higher uptime with additional database nodes. This extends the default database with cluster management and failover policies. PgBouncer in conjunction with Repmgr or Patroni is recommended.

Instance level replication with GitLab Geo (PREMIUM ONLY)

- Level of complexity: Very High

- Required domain knowledge: Storage replication

- Supported tiers: GitLab Premium and Ultimate

GitLab Geo allows you to replicate your GitLab instance to other geographical locations as a read-only fully operational instance that can also be promoted in case of disaster.

Configure GitLab to scale

NOTE: Note: From GitLab 13.0, using NFS for Git repositories is deprecated. In GitLab 14.0, support for NFS for Git repositories is scheduled to be removed. Upgrade to Gitaly Cluster as soon as possible.

The following components are the ones you need to configure in order to scale GitLab. They are listed in the order you'll typically configure them if they are required by your reference architecture of choice.

Most of them are bundled in the GitLab deb/rpm package (called Omnibus GitLab), but depending on your system architecture, you may require some components which are not included in it. If required, those should be configured before setting up components provided by GitLab. Advice on how to select the right solution for your organization is provided in the configuration instructions column.

| Component | Description | Configuration instructions | Bundled with Omnibus GitLab | |-----------|-------------|----------------------------| | Load balancer(s) (6) | Handles load balancing, typically when you have multiple GitLab application services nodes | Load balancer configuration (6) | No | | Object storage service (4) | Recommended store for shared data objects | Object Storage configuration | No | | NFS (5) (7) | Shared disk storage service. Can be used as an alternative Object Storage. Required for GitLab Pages | NFS configuration | No | | Consul (3) | Service discovery and health checks/failover | Consul configuration (PREMIUM ONLY) | Yes | | PostgreSQL | Database | PostgreSQL configuration | Yes | | PgBouncer | Database connection pooler | PgBouncer configuration (PREMIUM ONLY) | Yes | | Repmgr | PostgreSQL cluster management and failover | PostgreSQL and Repmgr configuration | Yes | | Patroni | An alternative PostgreSQL cluster management and failover | PostgreSQL and Patroni configuration | Yes | | Redis (3) | Key/value store for fast data lookup and caching | Redis configuration | Yes | | Redis Sentinel | Redis | Redis Sentinel configuration | Yes | | Gitaly (2) (7) | Provides access to Git repositories | Gitaly configuration | Yes | | Sidekiq | Asynchronous/background jobs | Sidekiq configuration | Yes | | GitLab application services(1) | Puma/Unicorn, Workhorse, GitLab Shell - serves front-end requests (UI, API, Git over HTTP/SSH) | GitLab app scaling configuration | Yes | | Prometheus and Grafana | GitLab environment monitoring | Monitoring node for scaling | Yes |

Configuring select components with Cloud Native Helm

We also provide Helm charts as a Cloud Native installation method for GitLab. For the reference architectures, select components can be set up in this way as an alternative if so desired.

For these kind of setups we support using the charts in an advanced configuration where stateful backend components, such as the database or Gitaly, are run externally - either via Omnibus or reputable third party services. Note that we don't currently support running the stateful components via Helm at large scales.

When designing these environments you should refer to the respective Reference Architecture above for guidance on sizing. Components run via Helm would be similarly scaled to their Omnibus specs, only translated into Kubernetes resources.

For example, if you were to set up a 50k installation with the Rails nodes being run in Helm, then the same amount of resources as given for Omnibus should be given to the Kubernetes cluster with the Rails nodes broken down into a number of smaller Pods across that cluster.

Footnotes

-

In our architectures we run each GitLab Rails node using the Puma webserver and have its number of workers set to 90% of available CPUs along with four threads. For nodes that are running Rails with other components the worker value should be reduced accordingly where we've found 50% achieves a good balance but this is dependent on workload.

-

Gitaly node requirements are dependent on customer data, specifically the number of projects and their sizes. We recommend that each Gitaly node should store no more than 5TB of data and have the number of

gitaly-rubyworkers set to 20% of available CPUs. Additional nodes should be considered in conjunction with a review of expected data size and spread based on the recommendations above. -

Recommended Redis setup differs depending on the size of the architecture. For smaller architectures (less than 3,000 users) a single instance should suffice. For medium sized installs (3,000 - 5,000) we suggest one Redis cluster for all classes and that Redis Sentinel is hosted alongside Consul. For larger architectures (10,000 users or more) we suggest running a separate Redis Cluster for the Cache class and another for the Queues and Shared State classes respectively. We also recommend that you run the Redis Sentinel clusters separately for each Redis Cluster.

-

For data objects such as LFS, Uploads, Artifacts, etc. We recommend an Object Storage service over NFS where possible, due to better performance.

-

NFS can be used as an alternative for object storage but this isn't typically recommended for performance reasons. Note however it is required for GitLab Pages.

-

Our architectures have been tested and validated with HAProxy as the load balancer. Although other load balancers with similar feature sets could also be used, those load balancers have not been validated.

-

We strongly recommend that any Gitaly or NFS nodes be set up with SSD disks over HDD with a throughput of at least 8,000 IOPS for read operations and 2,000 IOPS for write as these components have heavy I/O. These IOPS values are recommended only as a starter as with time they may be adjusted higher or lower depending on the scale of your environment's workload. If you're running the environment on a Cloud provider you may need to refer to their documentation on how configure IOPS correctly.